Key Takeaways

- Fun-CosyVoice3.5 is Alibaba Tongyi’s upgraded multilingual voice cloning model with FreeStyle instruction control across 13 languages.

- Fun-AudioGen-VD generates complete auditory scenes—combining custom voice design with immersive environmental audio.

- Both models use natural language commands instead of rigid preset tags, making professional voice synthesis accessible without technical expertise.

- First-packet latency reduced by 35%; rare character mispronunciation rate cut from 15.2% to 5.3%.

- Available via Alibaba Cloud’s DashScope API (cosyvoice-v3.5-plus and cosyvoice-v3.5-flash).

What Are Fun-CosyVoice3.5 and Fun-AudioGen-VD?

Fun-CosyVoice3.5 and Fun-AudioGen-VD are two AI speech models released by Alibaba’s Tongyi Lab on March 2, 2026, both built around a “FreeStyle” instruction paradigm that lets users control voice output through plain-text descriptions instead of fixed parameter menus.

Traditional TTS systems make users pick from dropdown menus: preset emotions, rigid style tags, limited tone options. These two models replace those menus with a free-text instruction. You describe the delivery you want in everyday language, and the model renders it.

They share the same core philosophy but serve different purposes:

- Fun-CosyVoice3.5 — focused on voice cloning and expressive speech control

- Fun-AudioGen-VD — focused on voice design and full-scene audio generation

Why the “FreeStyle” Approach Changes Everything

The fundamental problem with earlier voice synthesis tools was control rigidity. Users were constrained to a fixed set of emotion labels (“happy,” “sad,” “neutral”) with no way to express nuanced instructions like “sound calm on the surface but slightly tense underneath.”

FreeStyle removes that ceiling. Instead of selecting tags, you write instructions:

“Lower the pitch slightly, slow the pace, and add a hint of fatigue.”

The model interprets that sentence and renders it. This single shift moves voice generation from a configuration task into a creative task—lowering the skill floor while raising the quality ceiling.

Fun-CosyVoice3.5: Deep Dive

What Does Fun-CosyVoice3.5 Do?

Fun-CosyVoice3.5 is a multilingual voice cloning and expressive TTS model. It takes a reference audio sample (10–20 seconds is sufficient) and replicates that voice with high fidelity, then lets you steer delivery through natural language prompts.

Core Capabilities

FreeStyle Instruct-TTS

You describe the tone and delivery in a single sentence. Examples from Alibaba’s documentation:

- “Simulate a navigation assistant’s cheerful arrival message—light tone, a sense of journey completed.”

- “Simulate a Cantonese news journalist asking a guest a question—clear, steady, authoritative.”

The model handles both the voice replication and the expressive layering in one pass.

Multilingual Support — Now 13 Languages

Version 3.5 adds Thai, Indonesian, Portuguese, and Vietnamese to the existing lineup. Full language support now covers:

- Chinese (Mandarin + 16 regional dialects including Cantonese, Shanghainese, Sichuan)

- English, French, German, Spanish, Italian, Japanese, Korean, Russian

- Portuguese, Thai, Indonesian, Vietnamese

Across all 13 languages, Alibaba claims industry-leading scores on Word Error Rate (WER) and Speaker Similarity (SpkSim) benchmarks.

Dramatically Improved Pronunciation Accuracy

The model was specifically optimized for rare characters, classical Chinese text, and complex sentence structures. The result:

| Metric | Before | After |
| --- | --- | --- |
| Rare character error rate | 15.2% | 5.3% |
| Long-form stability | Inconsistent | Significantly improved |

This matters for content creators reading academic papers, legal documents, classical literature, or technical manuals.
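To put the rare-character figures in relative terms, the short sketch below computes the fractional drop between the two quoted rates. The helper name `relative_reduction` is illustrative and not part of any Alibaba tooling.

```python
def relative_reduction(before: float, after: float) -> float:
    """Return the fractional drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Error rates quoted in the table, expressed as percentages.
reduction = relative_reduction(15.2, 5.3)
print(f"Relative reduction: {reduction:.1%}")  # prints "Relative reduction: 65.1%"
```

In other words, roughly two out of every three rare-character errors are gone, going by the quoted numbers.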

Better Naturalness via Reinforcement Learning

Tongyi Lab used two RL-based fine-tuning methods:

- DiffRO + GRPO on the language model layer — improves rhythm and prosody with multi-channel duration rewards

- Flow-GRPO on the audio generation layer — improves voice similarity and audio quality

The result is speech that sounds more layered and human, rather than flat or robotic.

Performance Improvements

| Metric | Improvement |
| --- | --- |
| Tokenizer frame rate | Halved |
| First-packet latency | Reduced by 35% |

These changes matter for real-time applications—live streaming, customer service bots, interactive voice agents—where delays break the experience.
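A rough way to see what these two numbers mean in practice is to plug in a baseline. The sketch below does that arithmetic; the baseline frame rate and baseline latency are hypothetical placeholders chosen for illustration, not published figures for either model version.

```python
# Hypothetical baselines, used only to illustrate the arithmetic.
BASELINE_FRAME_RATE_HZ = 25.0
BASELINE_LATENCY_MS = 400.0


def tokens_for_clip(duration_s: float, frame_rate_hz: float) -> int:
    """Number of speech tokens needed to cover a clip at a given frame rate."""
    return round(duration_s * frame_rate_hz)


def reduced_latency(baseline_ms: float, reduction: float = 0.35) -> float:
    """Latency after applying a fractional reduction (0.35 means 35% lower)."""
    return baseline_ms * (1.0 - reduction)


clip_s = 10.0
before = tokens_for_clip(clip_s, BASELINE_FRAME_RATE_HZ)
after = tokens_for_clip(clip_s, BASELINE_FRAME_RATE_HZ / 2)  # frame rate halved
print(f"Tokens for a {clip_s:.0f}s clip: {before} -> {after}")
print(f"First-packet latency: {BASELINE_LATENCY_MS:.0f} ms -> "
      f"{reduced_latency(BASELINE_LATENCY_MS):.0f} ms")
```

Halving the frame rate halves the number of tokens the language model has to produce for the same audio, which is one plausible reason the first packet arrives sooner.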

How to Use Fun-CosyVoice3.5 via API

The model is available through Alibaba Cloud’s DashScope SDK. Here’s a minimal Python example to clone a voice:

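```python
from dashscope.audio.tts_v2 import VoiceEnrollmentService

# Assumes the DashScope API key is already configured,
# for example through the DASHSCOPE_API_KEY environment variable.
service = VoiceEnrollmentService()

# Enroll a cloned voice from a publicly reachable reference recording.
voice_id = service.create_voice(
    target_model='cosyvoice-v3.5-plus',
    prefix='myvoice',
    url='https://your-audio-file-url',
    language_hints=['zh'],  # or 'en', 'pt', 'th', 'id', 'vi', etc.
)
print(f"Voice ID: {voice_id}")
```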

Key parameters to know:

- target_model — must match the model used in your synthesis call later

- prefix — alphanumeric label (max 10 characters) for your voice ID

- url — public URL to your reference audio (10–20 seconds, clear, minimal noise)

- language_hints — helps the model identify the source audio language for better cloning
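Because a bad `prefix` or `url` only fails once the request reaches the service, it can help to check them locally first. The sketch below is a hypothetical pre-flight helper, not part of the DashScope SDK. It assumes "alphanumeric" means ASCII letters and digits, and that a public URL starts with `http://` or `https://`.

```python
import re

# Assumption: "alphanumeric, max 10 characters" means 1-10 ASCII letters/digits.
PREFIX_PATTERN = re.compile(r"[A-Za-z0-9]{1,10}")


def validate_enrollment_args(prefix: str, url: str) -> list[str]:
    """Return a list of problems found; an empty list means the inputs look OK."""
    problems = []
    if not PREFIX_PATTERN.fullmatch(prefix):
        problems.append("prefix must be 1-10 alphanumeric characters")
    if not url.startswith(("http://", "https://")):
        problems.append("url must be a public http(s) address")
    return problems


print(validate_enrollment_args("myvoice", "https://your-audio-file-url"))
print(validate_enrollment_args("my-voice-too-long", "file:///tmp/sample.wav"))
```

The first call returns an empty list; the second reports both the prefix and the URL.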

Voice quota: Up to 1,000 custom voices per account. Voices unused for 12 months are auto-deleted. Creating and managing voices is free; synthesis is billed per character.
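Since unused voices are removed after 12 months, it may be worth tracking last-use dates on your side. The following sketch flags voices approaching that window. The `last_used` mapping is something you would maintain yourself, and the whole-calendar-month counting is an assumption about how the 12 months are measured.

```python
from datetime import date

RETENTION_MONTHS = 12  # retention window stated above


def months_between(earlier: date, later: date) -> int:
    """Whole calendar months elapsed from `earlier` to `later`."""
    months = (later.year - earlier.year) * 12 + (later.month - earlier.month)
    if later.day < earlier.day:
        months -= 1  # the final month is not complete yet
    return months


def voices_at_risk(last_used: dict[str, date], today: date,
                   warn_months: int = RETENTION_MONTHS - 1) -> list[str]:
    """Voice IDs idle for at least `warn_months` whole months, sorted by ID."""
    return sorted(
        voice_id
        for voice_id, used_on in last_used.items()
        if months_between(used_on, today) >= warn_months
    )


# Hypothetical local records of when each voice was last used.
records = {"myvoice-a": date(2025, 1, 1), "myvoice-b": date(2026, 1, 1)}
print(voices_at_risk(records, today=date(2026, 2, 1)))
```

Warning one month early leaves time to run a synthesis call with the voice before it is deleted.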

Common troubleshooting tips:

- Use WAV over MP3 for source audio (avoids lossy compression artifacts)

- Keep speech continuous — avoid gaps longer than 2 seconds

- Ensure at least 60% of the audio clip is active speech

- Recommended sample rate: 16kHz or higher, mono channel
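Some of these recommendations can be checked automatically before uploading. The sketch below uses Python's standard `wave` module to inspect a WAV file's sample rate, channel count, and duration against the guidance above. It does not measure speech activity or silence gaps, and the function name is illustrative.

```python
import wave

MIN_SAMPLE_RATE_HZ = 16_000
MIN_DURATION_S, MAX_DURATION_S = 10.0, 20.0


def check_reference_wav(path: str) -> list[str]:
    """Return warnings for a WAV reference clip; an empty list means no issues found."""
    with wave.open(path, "rb") as wav:
        sample_rate = wav.getframerate()
        channels = wav.getnchannels()
        duration_s = wav.getnframes() / sample_rate

    warnings = []
    if sample_rate < MIN_SAMPLE_RATE_HZ:
        warnings.append(f"sample rate {sample_rate} Hz is below 16 kHz")
    if channels != 1:
        warnings.append(f"{channels} channels found; mono is recommended")
    if not MIN_DURATION_S <= duration_s <= MAX_DURATION_S:
        warnings.append(f"duration {duration_s:.1f}s is outside the 10-20s range")
    return warnings
```

Calling `check_reference_wav("sample.wav")` on a local file returns an empty list when all three checks pass.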

Fun-AudioGen-VD: Deep Dive

What Does Fun-AudioGen-VD Do?

Fun-AudioGen-VD is Alibaba’s scene-based audio generation model. Where Fun-CosyVoice3.5 clones and refines existing voices, Fun-AudioGen-VD creates voices from scratch based on text descriptions—and wraps them in fully designed acoustic environments.

Think of it as the difference between a voice actor (CosyVoice3.5) and a full production studio (AudioGen-VD).

Controllable Voice Design

You can specify every dimension of a voice without recording a single second of audio:

Basic attributes:

- Gender, age, accent, pitch, speech rate

Timbral qualities:

- Husky, bright, deep, magnetic, breathy

Emotional states:

- Anger, sadness, excitement, determination, anxiety

Role simulation:

- Customer service agent, military veteran, child, AI assistant, news broadcaster

Complex psychological states:

- “Calm on the surface but trembling inside”

- “Confident but hiding exhaustion”

Example instruction used by Tongyi Lab:

“Character: deranged villain. Acoustic style: sinister and erratic. Voice: shrill. Requirement: pitch spikes mid-sentence unpredictably, with irregular swallowing sounds and dismissive laughter, full of arrogance and psychological distortion.”

The model generates a voice that fits that description without any reference audio needed.

Immersive Scene Audio Generation

Fun-AudioGen-VD doesn’t stop at voice. It builds the sonic environment around it:

Background environments:

- Urban street noise, café ambiance, battlefield explosions, forest sounds

Spatial reverb effects:

- Cathedral acoustics, metal prison cells, underwater echo, small room reverb

Device-style filters:

- Vintage radio crackle, walkie-talkie compression, breathing mask muffling

Dynamic environmental interactions:

- Wind noise that fluctuates, echoes that shift with distance, progressive hoarseness

Example instruction:

“Scene: a busy café. Background: coffee grinder hum, clink of ceramic cups, distant murmur of conversations. Speaker tone: relaxed, like chatting over afternoon tea.”

The output isn’t just the voice—it’s the entire acoustic scene baked in.
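If you generate many scenes, it can be convenient to assemble instructions from structured fields rather than writing each one by hand. The sketch below only builds the instruction text, following the labeled layout of the café example. Whether the model requires or benefits from these exact labels is an assumption, and no API call is shown because the source does not document one for this model.

```python
def build_scene_instruction(scene: str, background: list[str], speaker_tone: str) -> str:
    """Compose a labeled instruction in the style of the cafe example above."""
    parts = [
        f"Scene: {scene}.",
        f"Background: {', '.join(background)}.",
        f"Speaker tone: {speaker_tone}.",
    ]
    return " ".join(parts)


instruction = build_scene_instruction(
    scene="a busy café",
    background=[
        "coffee grinder hum",
        "clink of ceramic cups",
        "distant murmur of conversations",
    ],
    speaker_tone="relaxed, like chatting over afternoon tea",
)
print(instruction)
```

This reproduces the café instruction word for word, and swapping the arguments yields a new scene with the same structure.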

Primary Use Cases for Fun-AudioGen-VD

- Game development — Generate NPC voices and ambient audio from text descriptions, no recording studio needed

- Film and animation — Rapidly prototype character voices and scene audio before final production

- Audiobooks and podcasts — Create unique voice identities for different characters without hiring multiple voice actors

- Advertising — Design brand voices from scratch with precise timbral and emotional specifications

- Training data generation — Produce high-quality reference audio for other voice cloning pipelines

Fun-CosyVoice3.5 vs. Fun-AudioGen-VD: Which One Do You Need?

| Need | Use This |
| --- | --- |
| Clone a real person’s voice | Fun-CosyVoice3.5 |
| Control how an existing voice is delivered | Fun-CosyVoice3.5 |
| Create a completely new voice from a description | Fun-AudioGen-VD |
| Generate a voice + environmental audio together | Fun-AudioGen-VD |
| Multilingual content production | Fun-CosyVoice3.5 |
| Game/film character audio | Fun-AudioGen-VD |
| Real-time applications (low latency required) | Fun-CosyVoice3.5 |

The models are designed to complement each other. Fun-AudioGen-VD can generate high-quality reference audio that Fun-CosyVoice3.5 can then clone and deploy at scale.
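For teams that want this decision guide in code, one option is a plain lookup. The sketch below encodes the comparison table as a dictionary. The need keys are made-up labels for illustration, and a project with mixed needs simply gets both models back.

```python
COSYVOICE = "Fun-CosyVoice3.5"
AUDIOGEN = "Fun-AudioGen-VD"

# Hypothetical need labels mapped to the model the comparison table recommends.
RECOMMENDATIONS = {
    "clone_voice": COSYVOICE,
    "control_delivery": COSYVOICE,
    "design_new_voice": AUDIOGEN,
    "voice_plus_environment": AUDIOGEN,
    "multilingual": COSYVOICE,
    "game_film_audio": AUDIOGEN,
    "real_time": COSYVOICE,
}


def recommend(needs: list[str]) -> set[str]:
    """Return the set of models covering every listed need."""
    return {RECOMMENDATIONS[need] for need in needs}


print(recommend(["clone_voice", "real_time"]))
print(sorted(recommend(["design_new_voice", "multilingual"])))
```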

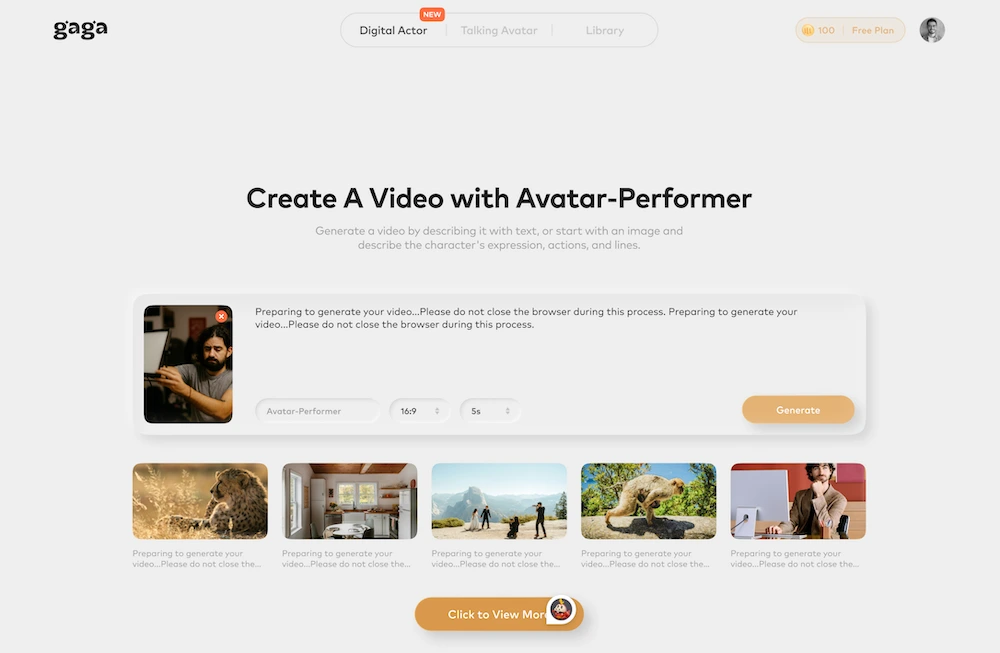

BONUS: Gaga AI — Taking AI Voice Into AI Video

If Fun-CosyVoice3.5 and Fun-AudioGen-VD handle the audio layer, Gaga AI tackles the full multimedia production stack—combining AI-generated video, voice cloning, and avatar creation into one platform.

What Is Gaga AI?

Gaga AI is an AI-powered content creation platform built around the Gaga-1 model, which fuses video generation with synchronized audio—voice, music, and ambient sound—in a single generation pass.

Key Features

Image to Video AI

Upload a static image and Gaga-1 animates it into a coherent video clip. The model understands scene context, lighting, and subject motion, producing smooth, realistic output without manual keyframing.

Video and Audio Infusion (Gaga-1 Model)

The Gaga-1 model’s core innovation is the simultaneous generation of video and its acoustic environment. Rather than generating silent video and adding audio in post-production, Gaga-1 produces both in sync—dialogue, background noise, and sound effects all aligned to the visual action.

AI Avatar

Create a photorealistic or stylized digital avatar that speaks, moves, and emotes. Useful for:

- Corporate training videos without on-camera talent

- Multilingual content (swap voice and lip-sync language)

- Brand mascots and virtual presenters

AI Voice Clone

Gaga AI includes a voice cloning layer that works alongside (or independently from) its video generation pipeline. Record a short sample, and the platform replicates that voice for use across all generated content—consistent brand voice at scale.

Text-to-Speech (TTS)

A built-in TTS engine handles script-to-voice generation for avatars and video narration, with style and emotion controls that mirror the FreeStyle paradigm seen in Alibaba’s models.

Why Gaga AI + Fun-CosyVoice3.5 Is a Powerful Combination

Use Fun-CosyVoice3.5 or Fun-AudioGen-VD to design or clone your ideal voice with precision. Export that audio and feed it into Gaga AI’s video pipeline to create avatar-driven video content with that exact voice, fully synced and animated.

This workflow bridges the gap between audio perfection and visual production—giving creators a complete, AI-driven content pipeline from script to finished video.

FAQ: Fun-CosyVoice3.5 and Fun-AudioGen-VD

What is Fun-CosyVoice3.5?

Fun-CosyVoice3.5 is a multilingual voice cloning and expressive TTS model from Alibaba’s Tongyi Lab. It supports 13 languages and allows users to control speech delivery using plain-text instructions rather than preset tags.

What is Fun-AudioGen-VD?

Fun-AudioGen-VD is Alibaba’s scene-based audio generation model. It creates custom voices from text descriptions and generates full acoustic environments—background noise, reverb, device filters—alongside the voice.

How is FreeStyle instruction control different from standard TTS?

Standard TTS uses fixed labels like “happy” or “neutral.” FreeStyle lets you write any natural language description, such as “sound tired but trying to hide it,” and the model interprets and renders it. No preset menu required.

What languages does Fun-CosyVoice3.5 support?

13 languages: Chinese (Mandarin + 16 dialects), English, French, German, Spanish, Italian, Japanese, Korean, Russian, Portuguese, Thai, Indonesian, and Vietnamese.

How much audio do I need to clone a voice with Fun-CosyVoice3.5?

10 to 20 seconds of clear audio is sufficient. Longer isn’t necessarily better—quality matters more than duration.

Can Fun-AudioGen-VD create voices without a reference recording?

Yes. That’s its primary use case. You describe the voice you want in text—age, gender, accent, emotion, timbre—and the model generates it from scratch.

Is Fun-CosyVoice3.5 available outside China?

The cosyvoice-v3.5-plus and cosyvoice-v3.5-flash models are currently only available in Alibaba Cloud’s China mainland deployment (Beijing region). For international regions (Singapore), use cosyvoice-v3-plus or cosyvoice-v3-flash.
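If your code runs in more than one deployment, the region split can be captured in a small lookup so the right model name is chosen automatically. In the sketch below, the model names come from the answer above, while the region keys (`"beijing"`, `"singapore"`) and tier labels are illustrative and are not official region identifiers.

```python
# Model names as listed above; region and tier keys are illustrative labels.
MODELS_BY_REGION = {
    "beijing": {"plus": "cosyvoice-v3.5-plus", "flash": "cosyvoice-v3.5-flash"},
    "singapore": {"plus": "cosyvoice-v3-plus", "flash": "cosyvoice-v3-flash"},
}


def pick_model(region: str, tier: str = "plus") -> str:
    """Return the CosyVoice model name for a deployment region and tier."""
    try:
        return MODELS_BY_REGION[region.lower()][tier]
    except KeyError:
        raise ValueError(f"no model known for region={region!r}, tier={tier!r}") from None


print(pick_model("Beijing"))
print(pick_model("singapore", "flash"))
```

Remember that `target_model` used at enrollment must match the model used for synthesis, so pick the region before creating voices.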

How do I access these models?

Through Alibaba Cloud’s DashScope API and SDK. Documentation is available at https://help.aliyun.com/zh/model-studio/text-to-speech and the cloning API reference at https://help.aliyun.com/zh/model-studio/cosyvoice-clone-api.

What’s the cost structure?

Creating, querying, updating, and deleting custom voices is free. Speech synthesis using cloned voices is billed per character of text synthesized.

What audio quality does the source recording need to be?

Recommended: WAV format, 16kHz+ sample rate, mono channel, no background noise, no gaps longer than 2 seconds, at least 60% active speech content.

Can I use Fun-AudioGen-VD for game audio?

Yes—it’s one of the primary intended use cases. You can generate character voices, ambient soundscapes, and environmental audio from text descriptions, significantly reducing production time and recording costs.

What’s the voice quota per account?

1,000 custom voices maximum. Voices that go unused for 12 months are automatically deleted to free quota.