Key Takeaways

- GLM-5-Turbo is a dedicated fast-inference model by Z.ai (Zhipu AI), built on the GLM-5 foundation and optimized specifically for agent-driven workflows like OpenClaw.

- It delivers real-time streaming, structured outputs, and long-chain task execution—all at significantly lower cost than closed-source alternatives.

- GLM-5 (the base model) has 744B parameters (40B active), a 200K token context window, and achieves open-source SOTA on SWE-bench, BrowseComp, and Terminal-Bench 2.0.

- GLM-5-Turbo is available via the Z.ai API, OpenRouter, and can be integrated into OpenClaw, Claude Code, and other agent frameworks in minutes.

- Bonus: Gaga AI is a powerful AI video creation platform that pairs well with AI-powered workflows—offering image-to-video, AI avatars, voice cloning, and TTS in one place.


What Is GLM-5-Turbo?

GLM-5-Turbo is Z.ai’s speed-optimized variant of GLM-5, designed for fast inference and strong performance in real-world agent environments. While GLM-5 is the flagship foundation model, GLM-5-Turbo is fine-tuned specifically for agent ecosystems—most notably the OpenClaw framework—making it the go-to choice when you need snappy, reliable responses across complex automated workflows.

Launched in March 2026, GLM-5-Turbo generated immediate market attention: Zhipu AI’s Hong Kong-listed shares surged as much as 16% on announcement day alone.

It’s not just a faster version of GLM-5. It’s been re-trained to handle:

- Long execution chains without losing coherence

- Complex instruction decomposition across multi-step tasks

- Stable tool use, function calling, and scheduled execution

- Real-time streaming responses and structured output formats

If you’re building anything autonomous—chatbots, coding agents, enterprise automation pipelines—GLM-5-Turbo deserves serious evaluation.

GLM-5 vs GLM-5-Turbo: What’s the Difference?

Understanding the relationship between the two models makes deployment decisions much easier.

| Feature | GLM-5 | GLM-5-Turbo |
|---|---|---|
| Primary use | Complex systems engineering, research | Agent workflows, OpenClaw, fast automation |
| Context window | 200K tokens | 204,800 tokens |
| Max output | 128K tokens | 131,072 tokens |
| Inference speed | 62+ tokens/sec (median) | Optimized for low-latency streaming |
| Reasoning mode | Yes (thinking mode) | Yes |
| Tool calling | Yes | Yes (enhanced) |
| Ideal for | Deep reasoning, SWE tasks, research | Agent pipelines, OpenClaw, enterprise |

Bottom line: Use GLM-5 when you need maximum reasoning depth. Use GLM-5-Turbo when you’re building production agent systems that require speed and reliability at scale.

What Makes GLM-5 So Powerful (The Foundation Matters)

To understand why GLM-5-Turbo is compelling, you need to understand the foundation it’s built on.

Architecture at a Glance

GLM-5 is a Mixture of Experts (MoE) model with 744 billion total parameters—but only 40 billion are active during any single inference pass. This is the key to its efficiency. More specifically:

- 744B total parameters / 40B active — roughly twice the scale of GLM-4.5

- DeepSeek Sparse Attention (DSA) integration — dramatically cuts deployment costs while preserving full long-context performance

- 28.5 trillion training tokens — up from 23T in the previous generation

- “Slime” async RL infrastructure — a novel reinforcement learning system that enables more precise post-training iterations

It was trained entirely on Huawei Ascend chips using MindSpore—a significant milestone in China’s push for AI hardware independence.
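To make "744B total / 40B active" concrete: in an MoE layer a router scores every expert for each token, and only the top-k experts actually run. Compute therefore scales with the active parameters (40/744 ≈ 5.4% here), while memory must still hold all of them. The sketch below is a generic, scalar toy of top-k gating, not GLM-5's actual router (its expert count and k are not given in this article); `top_k_route` and `moe_forward` are illustrative names.

```python
import math
from typing import Callable, Sequence


def top_k_route(logits: Sequence[float], k: int) -> list[tuple[int, float]]:
    """Return (expert index, weight) for the k highest-scoring experts.

    Weights are a softmax over the selected logits only, so they sum to 1.
    """
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(logits[i] - peak) for i in top}
    total = sum(exps.values())
    return [(i, exps[i] / total) for i in top]


def moe_forward(
    x: float,
    experts: Sequence[Callable[[float], float]],
    router_logits: Sequence[float],
    k: int = 2,
) -> float:
    """Combine outputs of the routed experts only; unselected experts never run.

    In a real model the router logits are computed from x by a learned layer
    and x is a vector; both are simplified to plain inputs here.
    """
    return sum(weight * experts[i](x) for i, weight in top_k_route(router_logits, k))
```

With four experts and k=2, only two expert functions are evaluated per input, which is the same reason GLM-5 runs 40B parameters per pass out of 744B.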

Benchmark Performance

GLM-5 doesn’t just benchmark well. It benchmarks at the frontier.

Coding & Engineering:

- 77.8% on SWE-bench Verified (open-source SOTA)

- 73.3% on SWE-bench Multilingual

- 56.2 on Terminal-Bench 2.0 (surpassing Gemini 3 Pro)

Reasoning & Math:

- 92.7% on AIME 2026 I

- 86.0% on GPQA-Diamond

Agentic Tasks:

- 62.0 on BrowseComp (web-scale retrieval and synthesis)

- Top open-model ranking on MCP-Atlas and τ²-Bench

In software engineering tasks, GLM-5 approaches Claude Opus 4.5-level performance while remaining open-weight and significantly cheaper.

What Is GLM-5-Turbo Actually Good At?

GLM-5-Turbo is specifically optimized for agent-driven environments—situations where AI must not just generate a response but act across multiple steps, tools, and time horizons.

1. Long-Chain Task Execution

Most LLMs degrade in quality after 10–15 tool calls. GLM-5-Turbo is engineered to stay coherent across extended execution chains—making it reliable for workflows that span dozens of sequential actions.
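A common pattern for long chains is a loop that feeds every tool result back into the conversation and enforces an explicit step budget, so a run that stops converging fails loudly instead of spinning. A minimal sketch, assuming OpenAI-style message dicts; `call_model` and `run_tool` are hypothetical callables you would back with a real chat-completions call and your own tool executor.

```python
from typing import Any, Callable

Message = dict[str, Any]


def run_agent_loop(
    call_model: Callable[[list[Message]], Message],
    run_tool: Callable[[str, str], str],
    task: str,
    max_steps: int = 50,
) -> tuple[str, int]:
    """Drive a tool-calling loop until the model replies without tool calls.

    call_model: hypothetical wrapper returning an assistant message dict that
      may contain "tool_calls" (OpenAI-style: id, function.name, function.arguments).
    run_tool: hypothetical executor taking (tool name, JSON argument string).
    Returns (final answer, number of model calls made).
    """
    messages: list[Message] = [{"role": "user", "content": task}]
    for step in range(1, max_steps + 1):
        reply = call_model(messages)
        messages.append(reply)
        tool_calls = reply.get("tool_calls") or []
        if not tool_calls:
            return reply.get("content") or "", step
        # Append one "tool" message per call so the model sees every result.
        for call in tool_calls:
            result = run_tool(call["function"]["name"], call["function"]["arguments"])
            messages.append({"role": "tool", "tool_call_id": call["id"], "content": result})
    raise RuntimeError(f"no final answer after {max_steps} model calls")
```

The step budget is a design choice: it caps cost on runaway chains, and the returned step count is a cheap signal for spotting tasks that need unusually long chains.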

2. Tool Use & Function Calling

The model handles function calling with high accuracy, a critical requirement for agent systems. Whether invoking shell commands, querying APIs, or processing database outputs, GLM-5-Turbo executes with fewer syntax errors than general-purpose models.
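On an OpenAI-compatible endpoint, function calling means declaring tools as JSON Schema and executing whatever calls the model returns. A minimal sketch of the local side, assuming the Z.ai endpoint accepts the standard OpenAI `tools` format; `get_ticket_status` is a hypothetical tool used only for illustration.

```python
import json
from typing import Any, Callable


def get_ticket_status(ticket_id: str) -> dict[str, Any]:
    """Hypothetical local tool; a real one would query your ticketing system."""
    return {"ticket_id": ticket_id, "status": "open"}


# Tool declaration in the OpenAI-compatible "tools" format.
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "get_ticket_status",
            "description": "Look up the status of a support ticket.",
            "parameters": {
                "type": "object",
                "properties": {"ticket_id": {"type": "string"}},
                "required": ["ticket_id"],
            },
        },
    }
]

REGISTRY: dict[str, Callable[..., Any]] = {"get_ticket_status": get_ticket_status}


def dispatch_tool_call(name: str, arguments: str) -> str:
    """Run one model-issued tool call and return JSON for the "tool" message.

    Errors are returned as JSON instead of raised, so the model can see them
    and retry with corrected arguments.
    """
    func = REGISTRY.get(name)
    if func is None:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        kwargs = json.loads(arguments or "{}")
    except json.JSONDecodeError as exc:
        return json.dumps({"error": f"invalid JSON arguments: {exc.msg}"})
    try:
        return json.dumps(func(**kwargs))
    except TypeError as exc:  # wrong or missing argument names
        return json.dumps({"error": str(exc)})
```

In use, pass `tools=TOOLS` to `client.chat.completions.create(...)`, then for each entry in `response.choices[0].message.tool_calls` call `dispatch_tool_call(call.function.name, call.function.arguments)` and send the result back as a `"tool"` message with the matching `tool_call_id`.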

3. Real-Time Streaming

Unlike batch-mode models, GLM-5-Turbo supports real-time streaming responses—essential for conversational agents where latency directly affects user experience.

4. Structured Output

Need JSON? Specific schemas? GLM-5-Turbo produces structured output reliably, reducing the need for post-processing layers in your pipeline.
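Even with reliable structured output, it is worth validating replies before they enter a pipeline. A minimal sketch, assuming you ask for JSON (the OpenAI-style `response_format={"type": "json_object"}` option may be supported; confirm in the Z.ai docs) and know which keys you expect; `parse_structured` is an illustrative helper, not part of any SDK.

```python
import json
from typing import Any


def parse_structured(text: str, required_keys: set[str]) -> dict[str, Any]:
    """Parse a reply that should contain one JSON object and check its keys.

    Tolerates surrounding prose or Markdown fences by slicing from the first
    "{" to the last "}". Raises ValueError (json.JSONDecodeError is a subclass)
    if no object parses or required keys are missing.
    """
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in reply")
    data = json.loads(text[start : end + 1])
    missing = required_keys.difference(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data
```

A failed parse is a natural trigger for one retry that includes the error message in the follow-up prompt.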

5. Enterprise System Integration

The model integrates with external toolsets and data sources out of the box, making it straightforward to embed into CRMs, ERPs, or custom business platforms.

How to Use GLM-5-Turbo: Step-by-Step

Option A: Via the Z.ai API (Direct)

Step 1: Sign up at z.ai and create an API key in the API Keys management page.

Step 2: Make sure you’ve subscribed to the GLM Coding Plan (plans start at $10/month).

Step 3: Call the model using a standard OpenAI-compatible API format:

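```python
import os

from openai import OpenAI

# OpenAI-compatible client pointed at Z.ai.
# Z.ai (international) accounts use api.z.ai; keys from the mainland-China
# BigModel platform use https://open.bigmodel.cn/api/paas/v4/ instead.
# Check docs.z.ai for the base URL that matches your plan.
client = OpenAI(
    api_key=os.environ.get("ZAI_API_KEY", "YOUR_ZAI_API_KEY"),
    base_url="https://api.z.ai/api/paas/v4/",
)

response = client.chat.completions.create(
    model="glm-5-turbo",
    messages=[
        {"role": "user", "content": "Refactor this Python function for production use…"},
    ],
)

print(response.choices[0].message.content)
```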

Step 4: Enable streaming for agent workflows by adding stream=True to your request.
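With `stream=True`, the SDK returns an iterator of chunks instead of a single response. A minimal sketch of consuming it, assuming the OpenAI Python SDK's chunk shape (`choices[0].delta.content`) and a client configured as in Step 3; `iter_text_deltas`, `collect_text`, and `stream_reply` are illustrative names, not SDK functions.

```python
from typing import Iterable, Iterator, Optional


def iter_text_deltas(stream: Iterable) -> Iterator[str]:
    """Yield text fragments from an OpenAI-style streaming response.

    Some chunks (role headers, the final stop chunk) carry no content and are skipped.
    """
    for chunk in stream:
        if chunk.choices and chunk.choices[0].delta.content:
            yield chunk.choices[0].delta.content


def collect_text(deltas: Iterable[Optional[str]]) -> str:
    """Print fragments as they arrive and return the assembled reply."""
    parts = []
    for text in deltas:
        if text:
            print(text, end="", flush=True)
            parts.append(text)
    print()
    return "".join(parts)


def stream_reply(client, prompt: str) -> str:
    """Send one prompt with stream=True using an already-configured OpenAI client."""
    stream = client.chat.completions.create(
        model="glm-5-turbo",
        messages=[{"role": "user", "content": prompt}],
        stream=True,
    )
    return collect_text(iter_text_deltas(stream))
```

Splitting extraction from collection keeps the printing logic testable without a network connection.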

Option B: Via OpenRouter

GLM-5-Turbo is accessible through OpenRouter at the model ID z-ai/glm-5-turbo. This is ideal if you’re already using OpenRouter for multi-provider routing.

Pricing on OpenRouter (at the time of writing; rates change often):

- Input: $0.72 per million tokens

- Output: $2.30 per million tokens
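OpenRouter exposes an OpenAI-compatible chat-completions endpoint, so the Option A client code also works with `base_url="https://openrouter.ai/api/v1"` and the model ID above. To show what actually goes over the wire, here is a stdlib-only sketch that builds (but does not send) the request; `build_openrouter_request` is an illustrative helper, and `OPENROUTER_API_KEY` is just a conventional environment-variable name.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_openrouter_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build a POST request for GLM-5-Turbo on OpenRouter without sending it."""
    body = {
        "model": "z-ai/glm-5-turbo",
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


# Sending it requires network access and a real key, e.g.:
# import os
# req = build_openrouter_request("Hello", os.environ["OPENROUTER_API_KEY"])
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```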

Option C: Inside OpenClaw (Recommended for Agent Builders)

OpenClaw is the primary agent framework GLM-5-Turbo was built for. Here’s how to configure it:

Step 1: Install OpenClaw via the official installer:

```bash
# macOS/Linux
curl -fsSL https://openclaw.ai/install.sh | sh
```

Step 2: Run the configuration wizard:

```bash
openclaw config
```

Select Z.AI as the model/auth provider and paste your API key.

Step 3: Add GLM-5-Turbo to your ~/.openclaw/openclaw.json:

```json
{
  "models": {
    "providers": {
      "zai": {
        "models": [
          {
            "id": "glm-5-turbo",
            "name": "GLM-5-Turbo",
            "reasoning": true,
            "contextWindow": 204800,
            "maxTokens": 131072
          }
        ]
      }
    }
  },
  "agents": {
    "defaults": {
      "model": {
        "primary": "zai/glm-5-turbo",
        "fallbacks": ["zai/glm-5", "zai/glm-4.7"]
      }
    }
  }
}
```
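If you already have an openclaw.json, pasting the snippet over it can clobber existing providers. A minimal sketch that merges the entry into an existing file instead, assuming the file is plain JSON (if it contains comments or other JSON5 syntax, `json.loads` will reject it) and that the key layout shown above is what your OpenClaw version expects; `add_glm_turbo` and `update_config_file` are hypothetical helper names.

```python
import json
from pathlib import Path
from typing import Any

# Values copied from the configuration snippet above.
GLM_TURBO_ENTRY = {
    "id": "glm-5-turbo",
    "name": "GLM-5-Turbo",
    "reasoning": True,
    "contextWindow": 204800,
    "maxTokens": 131072,
}


def add_glm_turbo(config: dict[str, Any]) -> dict[str, Any]:
    """Merge GLM-5-Turbo into a config dict, keeping other providers and models.

    An existing glm-5-turbo entry is replaced, so running this twice is harmless.
    The agents.defaults.model block is overwritten with the primary/fallback chain.
    """
    zai = config.setdefault("models", {}).setdefault("providers", {}).setdefault("zai", {})
    models = [m for m in zai.get("models", []) if m.get("id") != GLM_TURBO_ENTRY["id"]]
    models.append(dict(GLM_TURBO_ENTRY))
    zai["models"] = models
    defaults = config.setdefault("agents", {}).setdefault("defaults", {})
    defaults["model"] = {"primary": "zai/glm-5-turbo", "fallbacks": ["zai/glm-5", "zai/glm-4.7"]}
    return config


def update_config_file(path: Path = Path.home() / ".openclaw" / "openclaw.json") -> None:
    """Read (or start) the config file, merge the entry, and write it back."""
    config = json.loads(path.read_text()) if path.exists() else {}
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(add_glm_turbo(config), indent=2) + "\n")
```

Back up the file before running `update_config_file()`, since it rewrites the whole file.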

Step 4: Restart the gateway and start chatting:

```bash
openclaw gateway restart
openclaw tui
```

You’ll see GLM-5-Turbo active in the terminal UI, ready for agent tasks.

GLM-5-Turbo vs the Competition

How does it stack up against the models developers actually use?

GLM-5-Turbo vs Claude Opus 4.5

| Metric | GLM-5-Turbo | Claude Opus 4.5 |
|---|---|---|
| SWE-bench Verified | ~77.8% (GLM-5 base score) | 80.9% |
| Open-weight | ✅ Yes | ❌ No |
| API pricing (input / output, per 1M tokens) | ~$1 / ~$3.20 | $5 / $25 |
| Context window | 200K | 200K |
| OpenClaw native | ✅ Yes | Via proxy |

Claude Opus 4.5 holds a roughly 3-point edge on SWE-bench Verified. But at list prices GLM-5-Turbo costs about 80% less per million input tokens ($1 vs $5) and about 87% less per million output tokens ($3.20 vs $25). For teams running high-volume agent workloads, that cost gap is decisive.

GLM-5-Turbo vs GPT-4 Turbo

At roughly $1 input / $3.20 output per million tokens, GLM-5-Turbo is about 9 to 10 times cheaper than GPT-4 Turbo ($10 / $30 per million tokens), while offering a larger context window (200K vs 128K). For most agent use cases, the performance gap is small relative to the cost difference.

GLM-5-Turbo vs DeepSeek R1

DeepSeek R1 is the go-to for raw cost efficiency (~96% cheaper than proprietary models). GLM-5-Turbo trades some of that cost advantage for superior agentic reliability—specifically better tool-call stability and instruction-following in long chains.

The honest verdict: GLM-5-Turbo is the right choice if you’re building production-grade agent systems that require consistent multi-step execution. For pure reasoning tasks with tight budgets, DeepSeek R1 competes well.

Real-World Use Cases

1. Autonomous Coding Agent

Connect GLM-5-Turbo to OpenClaw with terminal access. Give it a GitHub issue. Watch it read the codebase, write a fix, run tests, and submit a PR—with minimal human input. This mirrors the workflow it was benchmarked on.

2. Enterprise Automation

GLM-5-Turbo integrates directly with external toolsets and data sources. Practical applications include:

- Extracting structured data from contracts and financial reports

- Automating customer service ticket triage and risk identification

- Translating formal texts into professional target-language output

3. Multi-Platform AI Assistant

Using OpenClaw channels, GLM-5-Turbo can power assistants across Telegram, Discord, Slack, and WhatsApp simultaneously—all routed through a single agent configuration.

4. Intelligent Model Routing

In high-load environments, you can configure GLM-5-Turbo as the primary model with GLM-4.7 and GLM-4.6 as fallbacks. This ensures reliability without a hard dependency on any single model version.
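Inside OpenClaw the fallback chain is declared in configuration. If you call the API directly, the same idea is a loop over an ordered model list. A minimal sketch; `call` is a hypothetical `(model, prompt) -> text` function wrapping your client, and the fallback model IDs are assumptions to check against the Z.ai model list.

```python
from typing import Callable, Sequence


def complete_with_fallbacks(
    call: Callable[[str, str], str],
    prompt: str,
    models: Sequence[str] = ("glm-5-turbo", "glm-4.7", "glm-4.6"),
) -> tuple[str, str]:
    """Try each model in order; return (model used, reply) from the first success."""
    errors: list[str] = []
    for model in models:
        try:
            return model, call(model, prompt)
        except Exception as exc:  # in practice, catch only rate-limit/server errors
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))
```

Returning the model name alongside the reply makes it easy to log how often traffic actually falls back.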

Pricing & Access Summary

| Access method | Input price (per 1M tokens) | Output price (per 1M tokens) | Notes |
|---|---|---|---|
| Z.ai direct API | ~$1.00 | ~$3.20 | Requires Coding Plan subscription |
| OpenRouter | $0.72 | $2.30 | Via z-ai/glm-5-turbo |
| DeepInfra | $0.80 | $2.56 | Fastest affordable provider |
| Novita AI | $1.00 | $3.20 | Context caching at $0.20/M |
| Fireworks | Higher | Higher | Top speed: 212.8 tokens/s |
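Per-token prices only become meaningful against your own volume. A minimal sketch that prices a workload with the rates from the table above (treated as a snapshot; Fireworks is omitted because no figure is given, and Coding Plan subscription fees are not included); `estimate_cost` and `compare` are illustrative names.

```python
# USD per 1M tokens as (input, output), copied from the table above.
PRICES_PER_M = {
    "zai": (1.00, 3.20),
    "openrouter": (0.72, 2.30),
    "deepinfra": (0.80, 2.56),
    "novita": (1.00, 3.20),
}


def estimate_cost(provider: str, input_tokens: int, output_tokens: int) -> float:
    """USD cost of a workload at the listed per-million-token rates."""
    in_rate, out_rate = PRICES_PER_M[provider]
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


def compare(input_tokens: int, output_tokens: int) -> list[tuple[str, float]]:
    """Providers sorted from cheapest to most expensive for this workload."""
    costs = [(name, round(estimate_cost(name, input_tokens, output_tokens), 2)) for name in PRICES_PER_M]
    return sorted(costs, key=lambda item: item[1])
```

For example, a month with 200M input and 20M output tokens comes to about $190 on OpenRouter versus about $264 at the Z.ai direct rates.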

GLM-5-Turbo is included in the GLM Coding Plan, which provides integrated access across OpenClaw, Claude Code via LiteLLM proxy, Kilo Code, and other agentic IDEs.

Known Limitations

Being objective matters. Here are the real constraints:

- Hardware costs for self-hosting: Running the full GLM-5 base model requires approximately 1,490GB of GPU memory—accessible only to well-funded teams. The API route bypasses this.

- Benchmark vs. real-world gap: GLM-5-Turbo excels at structured agentic tasks. It’s less differentiated for open-ended creative or conversational use cases where Claude and GPT-4o have more tuning.

- OpenClaw priority: Under high API load, Z.ai may apply fair-use measures (dynamic queuing, rate limiting) to OpenClaw traffic, because coding-agent tasks receive scheduling priority.

- Not fully multimodal: GLM-5-Turbo handles text natively. Vision capabilities require the GLM-4.6V or GLM-5V series.
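The ~1,490GB self-hosting figure is essentially weight-storage arithmetic: 744B parameters × 2 bytes each in BF16 ≈ 1,488GB. Because an MoE model must keep every expert resident, the total parameter count, not the 40B active, drives memory. A back-of-the-envelope sketch that counts weights only (KV cache, activations, and runtime overhead come on top); `weight_memory_gb` is an illustrative name.

```python
# Bytes per parameter: BF16 stores 2, FP8 stores 1.
GLM5_TOTAL_PARAMS = 744e9
GLM5_ACTIVE_PARAMS = 40e9


def weight_memory_gb(total_params: float, bytes_per_param: float) -> float:
    """GB (1e9 bytes) needed just to hold the weights.

    Excludes KV cache, activations and framework overhead, so a real
    deployment needs noticeably more than this.
    """
    return total_params * bytes_per_param / 1e9


bf16_gb = weight_memory_gb(GLM5_TOTAL_PARAMS, 2)  # all experts must be loaded
fp8_gb = weight_memory_gb(GLM5_TOTAL_PARAMS, 1)
active_only_gb = weight_memory_gb(GLM5_ACTIVE_PARAMS, 2)  # misleading for MoE memory
```

The FP8 weights mentioned in the FAQ halve the weight footprint to roughly 744GB, still well beyond a single GPU.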

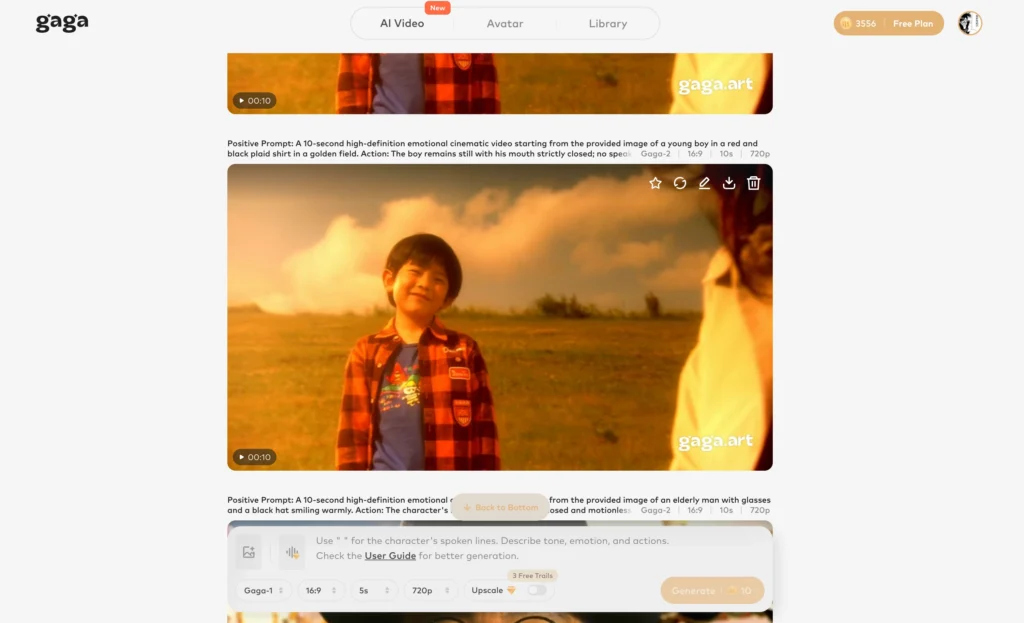

Bonus: Gaga AI — The AI Video Platform Worth Knowing

If you’re building AI-powered content pipelines or just need to create compelling video without a production team, Gaga AI (gaga.art) is the tool that keeps coming up.

Developed by Sand.ai, Gaga AI is an all-in-one video creation platform built on the GAGA-1 model—a unified system that generates video and audio simultaneously, unlike platforms that treat voice and visuals as separate problems.

What Gaga AI Can Do

Image to Video AI

Upload any photo, write a prompt, and Gaga AI animates it into a smooth, expressive video clip. The GAGA-1 model focuses on emotion-driven performance—natural gestures, micro-expressions, and realistic body language, not just motion blur over a still image. Most 10-second videos generate in 3–4 minutes.

Video & Audio Infusion

Gaga AI’s audio infusion tool lets you sync custom soundtracks, ambient audio, or AI-generated music to your video timeline. The AI reads visual beats and motion cues to match audio timing automatically—no manual keyframing needed.

AI Avatar

Create a hyper-realistic presenter avatar from a single photo. The avatar supports multiple visual styles (realistic, cartoon, cinematic), multiple languages, and full emotional range. Use cases range from product demos and training videos to faceless YouTube channels and multilingual marketing.

AI Voice Clone

Gaga AI can clone any voice from as little as 15 seconds of sample audio—preserving pitch, accent, cadence, and tonal quality. The cloned voice replicates naturally across any script, making it ideal for brand consistency or creators who want every video to sound authentically like themselves.

Text-to-Speech (TTS)

For users without a voice sample, Gaga AI’s TTS engine offers pre-built voices across genders, accents, and emotional tones—with SSML-style controls for pauses, emphasis, and speaking rate directly in the script editor.

How to Get Started with Gaga AI

- Sign up free at gaga.art — no credit card required for the trial tier

- Choose your creation mode: Image to Video, AI Avatar, or Voice Clone

- Upload your source material: a photo (JPG/PNG, ideally 1080×1920 for vertical or 1920×1080 for horizontal)

- Add your script or audio: type text for TTS, upload a voice sample, or import an existing audio track

- Generate and export: preview your video, then export in high-quality format

Free-tier outputs include a watermark. Paid plans unlock watermark-free exports and full commercial licensing rights.

Why It Pairs Well with AI Agent Workflows

If you’re already using GLM-5-Turbo to automate content generation, Gaga AI closes the loop on the video production side. GLM-5-Turbo can write scripts, draft copy, and structure content. Gaga AI can turn that output into polished video with a branded avatar and cloned voice—all without a camera, studio, or editing team.

FAQ: GLM-5-Turbo

What is GLM-5-Turbo?

GLM-5-Turbo is a fast-inference language model from Z.ai (Zhipu AI), optimized for agent-driven workflows like OpenClaw. It handles long-chain task execution, tool use, and structured outputs with better stability than general-purpose models at similar price points.

How is GLM-5-Turbo different from GLM-5?

GLM-5 is the flagship foundation model designed for deep reasoning and complex system engineering. GLM-5-Turbo is a variant fine-tuned for speed and reliability in agent environments—prioritizing low-latency streaming, instruction following, and tool-call stability over raw reasoning depth.

Is GLM-5-Turbo free to use?

GLM-5-Turbo requires a Z.ai API key and a GLM Coding Plan subscription (starting at $10/month). It is also available on OpenRouter and other third-party providers with pay-per-token pricing.

What context window does GLM-5-Turbo support?

GLM-5-Turbo supports a 204,800-token context window with a maximum output of 131,072 tokens—suitable for processing large codebases, long documents, and extended multi-turn agent sessions.

Can I use GLM-5-Turbo in Claude Code?

Yes. GLM-5-Turbo can be routed into Claude Code through a LiteLLM proxy, which exposes the model behind an Anthropic-compatible endpoint that Claude Code can use as its backend (by pointing ANTHROPIC_BASE_URL at the proxy).

How does GLM-5-Turbo compare to Claude Opus 4.5 for coding?

GLM-5 scores 77.8% on SWE-bench Verified compared to Claude Opus 4.5's 80.9%, a gap of roughly 3 percentage points. At list prices, GLM-5-Turbo costs about 80% less per million input tokens ($1 vs $5) and about 87% less per million output tokens ($3.20 vs $25), making it highly competitive for high-volume coding agent deployments.

Is GLM-5 open-source?

Yes. GLM-5 is open-weight, available on Hugging Face under a permissive license. Note that running the full model locally requires significant hardware (approximately 1,490GB of GPU memory for BF16 precision). Cloud API access is the practical path for most teams.

What is OpenClaw?

OpenClaw is an open-source AI agent framework that connects large language models to communication channels (Telegram, Discord, Slack, iMessage, etc.) and tools. GLM-5-Turbo was specifically trained and optimized for OpenClaw scenarios, making it the recommended model within that ecosystem.

What kind of tasks is GLM-5-Turbo NOT ideal for?

GLM-5-Turbo is text-only in this configuration. For vision or multimodal tasks, use GLM-4.6V or GLM-5V. For pure creative writing or conversational tasks without agentic requirements, general-purpose models with heavier instruction tuning may perform better.

Where can I access GLM-5-Turbo today?

Via Z.ai’s platform (docs.z.ai), OpenRouter (z-ai/glm-5-turbo), Novita AI, DeepInfra, Fireworks, and several other third-party API providers. For local deployment, FP8 weights are available on Hugging Face at zai-org/GLM-5-FP8.