Key Takeaways

- Veo 3.2 (codenamed “Snowbunny,” powered by the Artemis engine) has leaked into Google’s internal services and is expected to roll out to Workspace soon.

- Rumored upgrades include 30-second native generation, true 4K output, and semantically aware audio with near-perfect lip-sync.

- Veo 3.2 faces stiff competition from Seedance 2.0, which currently leads on workflow control.

- You can access current Veo 3.1 capabilities today via platforms like SuperMaker AI.

What Is Google Veo 3.2?

Google Veo 3.2 is the next iteration of Google DeepMind’s flagship AI video generation model, following Veo 3 and Veo 3.1. According to leaks from January 2026, Veo 3.2 is reportedly already running inside select Google services and is expected to land in Google Workspace soon.

This isn’t a routine patch. Internally codenamed “Snowbunny” and powered by a new Artemis engine, Veo 3.2 appears designed to be a significant leap in physical realism, native audio-video fusion, and output quality.

How Did Veo Evolve to Get Here?

Understanding Veo 3.2 requires a quick look at the model’s history:

Veo 3 (mid-2025)

- Introduced native audio generation—synchronized dialogue, sound effects, ambient noise

- Major leap in physics simulation and human motion realism

- The “talkies” moment for AI video

Veo 3.1 (late 2025–early 2026)

- Added support for vertical (9:16) formats for YouTube Shorts and TikTok

- Enhanced “Ingredients to Video” mode for reference-image consistency

- Native 1080p with upscaled 4K options

- Deeper integration across Gemini, Google Flow, YouTube Create, and Vertex AI

Veo 3.2 (leaked, expected 2026)

- Codenamed Snowbunny; Artemis engine

- Spotted in internal API logs and third-party evaluation platforms like Artificial Analysis

- Flagged by reliable Google leaker @bedros_p on January 18, 2026: “Veo 3.2 has made its way into some services — to be added to Workspace.”

What Are the Rumored Veo 3.2 Upgrades?

1. 30-Second Native Generation

Veo 3.2 reportedly uses Enhanced Spacetime Patches to push native single-shot generation from the current 8-second limit to a full 30 seconds. This is one of the most-requested improvements from creators and would unlock genuine short-film storytelling in a single prompt.
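To make the difference concrete, here is a minimal sketch of the arithmetic, assuming the 8-second and 30-second limits quoted above. The function name `segments_needed` is hypothetical and is not part of any Google API; it only counts how many separately generated clips a sequence of a given length would require.

```python
import math


def segments_needed(target_sec: float, max_clip_sec: float) -> int:
    """Return how many clips are needed to cover target_sec of footage
    when each generated clip can be at most max_clip_sec long."""
    if target_sec <= 0 or max_clip_sec <= 0:
        raise ValueError("durations must be positive")
    return math.ceil(target_sec / max_clip_sec)


if __name__ == "__main__":
    target = 30  # seconds of footage wanted
    for limit in (8, 30):  # current limit vs. rumored limit, per the text
        clips = segments_needed(target, limit)
        seams = clips - 1  # each seam is a cut that must be matched by hand
        print(f"{limit}s limit: {clips} clip(s), {seams} seam(s)")
```

Under the current limit, a 30-second sequence takes four clips and three cuts where continuity can break. Under the rumored limit it would be a single clip with no cuts.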

2. Semantic Audio (“Sounds That Make Sense”)

Instead of layering generic sound effects after the fact, Veo 3.2 is said to natively compute contextually accurate audio. Example: a character walking on snow generates a precise crunching sound. The model also reportedly supports near-perfect lip-sync for multi-speaker dialogue.

3. True 4K Output (AI Detail Reconstruction)

Rather than upscaling a lower-resolution base, Veo 3.2’s AI reportedly redraws individual details (raindrops, skin pores, hair strands) at a native 4K level. If accurate, this would be a fundamentally different approach from post-processing upscaling.

4. Google Workspace Integration

The leaked internal message specifically mentions Workspace as the next deployment target. Likely entry points include Google Vids, Slides, and Docs—meaning teams could generate video content directly inside presentations or training materials.

5. Other Speculated Improvements

- Faster “Veo 3 Fast” generation modes for low-latency previews

- Better character/object persistence across multi-shot sequences

- Tighter integration with Android XR and Gemini 3 reasoning

How Does Veo 3.2 Compare to Competitors?

The AI video space is more competitive than ever. Here’s how Veo 3.2 stacks up:

| Feature | Veo 3.2 (rumored) | Seedance 2.0 | Grok-Imagine (Musk claim) |
| --- | --- | --- | --- |
| Native generation length | 30 sec | ~10–15 sec | Not confirmed |
| Audio quality | Semantic, lip-sync | Strong | Not confirmed |
| Output resolution | True 4K | 1080p upscale | Not confirmed |
| Workflow control | Moderate | Excellent | Unknown |
| Ecosystem integration | Google Workspace | Standalone | X/xAI apps |

The key distinction: Veo 3.2 aims to be a virtual physics engine—obsessively accurate in how light, motion, and sound behave in the real world. Seedance 2.0 takes the opposite approach: a virtual film studio, giving creators camera angles, lighting control, and continuity tools. Neither is objectively better—they serve different creative philosophies.

How to Access Veo Today (Before 3.2 Drops)

Veo 3.2 isn’t publicly available yet, but Veo 3.1 is accessible through multiple channels right now:

- Gemini app (Pro/Advanced) – Consumer-friendly access with free trial options

- Google Flow – Google’s dedicated AI filmmaking tool for more advanced use

- Vertex AI / Gemini API – Developer and enterprise access (~$0.20–$0.60/sec depending on resolution and audio)

- YouTube Create / Shorts – Embedded Veo tools for short-form creators

- SuperMaker AI – Third-party platform with Veo integration, great for testing capabilities without a Google account

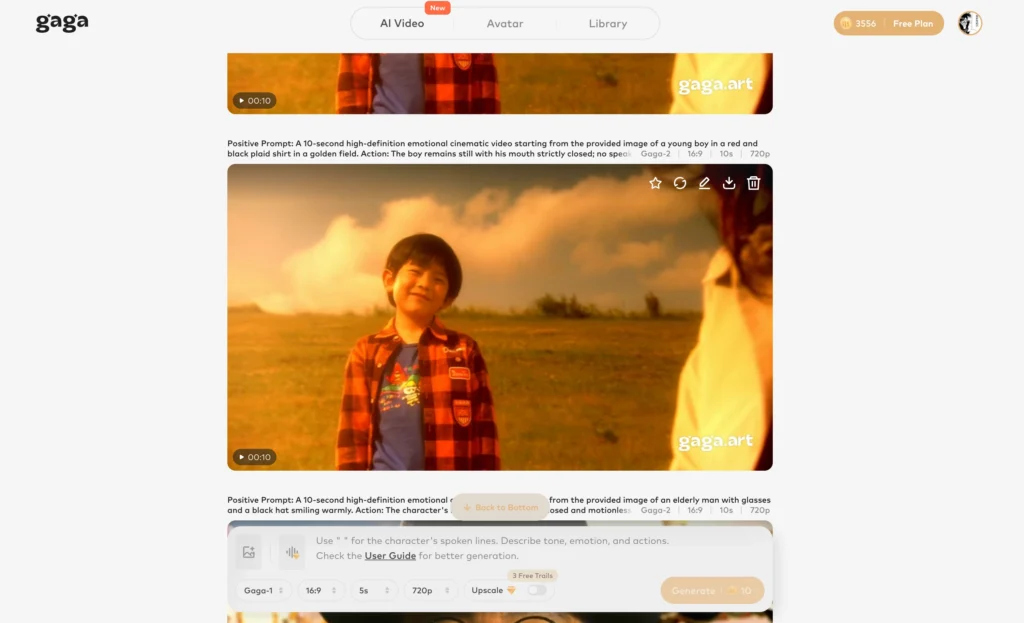
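For budgeting, the per-second API price range listed above translates into clip costs as follows. This is a rough sketch, not an official calculator: the two rate constants are simply the approximate $0.20 and $0.60 per-second figures quoted above, and `estimate_cost_range` is a hypothetical helper. The 30-second row applies Veo 3.1 pricing to a rumored Veo 3.2 clip length purely for illustration, since no Veo 3.2 pricing has been announced.

```python
# Approximate per-second rates quoted in the article (assumed, not official).
LOW_RATE_USD_PER_SEC = 0.20
HIGH_RATE_USD_PER_SEC = 0.60


def estimate_cost_range(duration_sec: float) -> tuple[float, float]:
    """Return the (low, high) estimated cost in USD for one clip."""
    if duration_sec < 0:
        raise ValueError("duration_sec must be non-negative")
    low = round(duration_sec * LOW_RATE_USD_PER_SEC, 2)
    high = round(duration_sec * HIGH_RATE_USD_PER_SEC, 2)
    return low, high


if __name__ == "__main__":
    # 8 s is the current single-shot limit; 30 s is the rumored one.
    for seconds in (8, 30):
        low, high = estimate_cost_range(seconds)
        print(f"{seconds}s clip: ${low:.2f} to ${high:.2f}")
```

Actual charges depend on the resolution and audio options chosen, so treat these figures as bounds rather than quotes.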

Bonus: Gaga AI — The All-in-One Video Generator Worth Knowing

While waiting for Veo 3.2, it’s worth exploring Gaga AI, a rising AI video platform that bundles several powerful capabilities in one place:

Image to Video AI

Upload a static image and let Gaga animate it into a fluid, realistic video clip. Useful for turning product photos, illustrations, or stills into engaging content without a full shoot.

Video and Audio Infusion

Gaga AI lets you infuse new audio—music, voiceover, or ambient sound—directly into existing video. No separate editing software needed. The sync is handled automatically.

AI Avatar

Generate a photorealistic or stylized on-screen presenter from scratch. Useful for explainer videos, branded content, or training materials where you don’t want to appear on camera.

AI Voice Clone

Record a short voice sample and Gaga AI clones it. You can then generate unlimited voiceover from text in your own voice—no re-recording required.

Text-to-Speech (TTS)

Gaga’s TTS engine supports natural, expressive speech across multiple languages and tones. Choose from a library of voices or use your cloned voice for consistent brand narration.

Bottom line: If Veo 3.2 is the high-end physics engine for AI video, Gaga AI is the versatile studio toolkit—especially strong for creators who need avatars, voice, and image-to-video in one workflow.

FAQ

What is Google Veo 3.2?

Veo 3.2 is Google DeepMind’s next AI video generation model, codenamed Snowbunny and powered by the Artemis engine. It has leaked into internal Google services as of January 2026 and is expected to roll out publicly to Google Workspace soon.

When will Veo 3.2 be released?

No official date has been announced. Based on the January 18, 2026 leak from @bedros_p and Google’s typical rollout pattern (internal testing → select users → API → public announcement), a release could come within weeks of the leak.

How long can Veo 3.2 generate video?

Rumored upgrades suggest up to 30 seconds of native single-shot generation, up from the current 8-second limit in Veo 3.1.

Will Veo 3.2 support 4K video?

Yes, according to leaks. Unlike Veo 3.1’s upscaling approach, 3.2 reportedly uses AI to reconstruct fine details (hair, pores, raindrops) at a native 4K level.

How is Veo 3.2 different from Veo 3.1?

Veo 3.1 added vertical formats, improved reference-image consistency, and native 1080p output with upscaled 4K options. Veo 3.2 is rumored to go further with 30-second generation, semantic audio with lip-sync, true 4K output, and Workspace integration.

Can I access Veo 3.2 today?

Not yet publicly. You can access Veo 3.1 through the Gemini app, Google Flow, Vertex AI, or SuperMaker AI while you wait.

Is Veo 3.2 better than Seedance 2.0?

They serve different needs. Veo 3.2 prioritizes physical realism and audio accuracy. Seedance 2.0 focuses on creative control and workflow continuity. Neither is universally “better”—choice depends on your production goals.

What is Gaga AI?

Gaga AI is an all-in-one AI video platform offering image-to-video generation, audio infusion, AI avatars, voice cloning, and text-to-speech—a strong option for creators needing a full video toolkit in one place.