Key Takeaways

- According to its authors, SkyReels-V4 is the first video foundation model to simultaneously support multimodal input (text, images, video, audio), joint video–audio generation, and a unified generation/inpainting/editing framework.

- It uses a dual-stream Multimodal Diffusion Transformer (MMDiT), with one branch for video and one for audio, and both branches share a single frozen Multimodal Large Language Model (MLLM) text encoder.

- Output reaches 1080p at 32 FPS for clips of up to 15 seconds, with synchronized audio: cinema-level quality by current AI-video standards.

- It ranks #2 on the Artificial Analysis Video Arena leaderboard for text-to-video with audio (as of February 2026), and in the team’s own human evaluation it receives more “Good” than “Bad” verdicts against Kling 2.6, Seedance 1.5 Pro, Veo 3.1, and Wan 2.6.

- Four headline capabilities: multimodal precision control, professional video inpainting, full-dimension video editing, and high-quality audio generation.


What Is SkyReels-V4?

SkyReels-V4 is a unified multi-modal video foundation model developed by the SkyReels Team at Skywork AI (Kunlun). Released in February 2026, it is designed to jointly generate video and audio from a wide range of inputs — text, images, video clips, masks, and audio references — in a single unified pipeline.

Before SkyReels-V4, the market was fragmented: some models handled video generation, others handled audio-driven animation, and editing tools were separate entirely. SkyReels-V4 collapses all of these into one architecture. Think of it as replacing a chain of specialist tools, each with its own workflow, with a single all-in-one production platform.

The research paper (arXiv:2602.21818) introduces it as, to the team’s knowledge, the first model worldwide to simultaneously unify:

- Rich multimodal inputs

- Joint video–audio generation

- Generation, inpainting, and editing under one framework

Why SkyReels-V4 Matters: The Problem It Solves

The AI video generation space has had three persistent pain points:

No native audio. Most video models produce silent clips. Syncing audio afterward is time-consuming and prone to mismatches.

Single-modal inputs only. Most models only accept text prompts. Adding a reference image or audio clip? Not supported.

Quality vs. speed tradeoff. High-resolution generation is slow and memory-intensive; fast generation means low quality.

SkyReels-V4 addresses all three directly. Its dual-stream architecture generates audio natively alongside video. Its MLLM-based encoder processes text, images, videos, and audio together. And its efficiency strategy — joint low-resolution / high-resolution keyframe generation with a dedicated Refiner module — makes 1080p, 32 FPS, 15-second generation computationally feasible.

How SkyReels-V4 Works: The Architecture

Dual-Stream MMDiT: Video and Audio as Native Equals

At the core of SkyReels-V4 is a dual-stream Multimodal Diffusion Transformer (MMDiT). Two branches run in parallel:

- Video branch — synthesizes visual content

- Audio branch — generates temporally aligned audio

Both branches share a single frozen MLLM text encoder, which processes a combined prompt containing both visual and acoustic descriptions. This unified semantic context is what allows the model to understand instructions like: “generate a scene where a woman walks through a rainy street, with the sound of footsteps and distant thunder.”

Each transformer block includes bidirectional cross-attention between the two branches: the audio stream attends to video features, and the video stream attends back to audio features. Because this exchange happens in every block throughout the network, audio and video stay tightly synchronized rather than matching only at the surface.
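The bidirectional exchange can be illustrated with a toy, single-head sketch in plain Python. It is an illustration under stated assumptions, not the paper’s implementation: real blocks use learned query/key/value projections, multiple heads, and normalization, all omitted here, and the function names are hypothetical. It also assumes both streams already share one hidden width. Both directions read the pre-update tokens, so neither stream sees the other’s already-updated features within the same step.

```python
import math

def softmax(scores):
    # Subtract the max for numerical stability before exponentiating
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Single-head scaled dot-product attention (no learned projections)."""
    d = len(queries[0])
    out = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, values))
                    for j in range(len(values[0]))])
    return out

def bidirectional_exchange(video_tokens, audio_tokens):
    """Hypothetical exchange step: video attends to audio, audio attends to video.
    Both directions read the pre-update tokens; results are added residually."""
    video_ctx = cross_attention(video_tokens, audio_tokens, audio_tokens)
    audio_ctx = cross_attention(audio_tokens, video_tokens, video_tokens)
    new_video = [[x + c for x, c in zip(t, ctx)] for t, ctx in zip(video_tokens, video_ctx)]
    new_audio = [[x + c for x, c in zip(t, ctx)] for t, ctx in zip(audio_tokens, audio_ctx)]
    return new_video, new_audio

# Toy example: 2 video tokens and 3 audio tokens, each of width 2
video = [[1.0, 0.0], [0.0, 1.0]]
audio = [[0.5, 0.5], [1.0, -1.0], [0.0, 2.0]]
new_video, new_audio = bidirectional_exchange(video, audio)
```

The two streams may have very different token counts (here 2 versus 3); cross-attention only requires a shared feature width, which is what lets a short video sequence and a long audio sequence exchange information directly.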

To align temporal scales (video latents span 21 frames; audio latents contain over 200,000 tokens at 44.1 kHz), the model applies Rotary Positional Embeddings (RoPE) with frequency scaling, ensuring both modalities reference the same timeline.
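To make the alignment idea concrete, here is a minimal sketch assuming a standard one-dimensional RoPE and a linear rescaling of audio token positions onto the video-frame axis; the paper’s exact scaling scheme may differ, and the helper names are hypothetical. Multiplying every position by a factor s is equivalent to multiplying every RoPE frequency by s, which is why position scaling and frequency scaling describe the same operation. The toy audio length of 210 tokens stands in for the real, much longer sequence.

```python
import math

def apply_rope(vec, position, base=10000.0):
    """Standard RoPE: rotate each pair (2i, 2i+1) by angle position * base**(-2i/dim)."""
    dim = len(vec)
    out = list(vec)
    for i in range(dim // 2):
        angle = position * base ** (-2 * i / dim)
        c, s = math.cos(angle), math.sin(angle)
        x, y = vec[2 * i], vec[2 * i + 1]
        out[2 * i] = x * c - y * s
        out[2 * i + 1] = x * s + y * c
    return out

def aligned_positions(num_tokens, num_video_frames):
    """Hypothetical alignment: map token index i onto the video-frame timeline."""
    return [i * num_video_frames / num_tokens for i in range(num_tokens)]

video_pos = aligned_positions(21, 21)    # 0.0, 1.0, ..., 20.0 (unchanged)
audio_pos = aligned_positions(210, 21)   # 0.0, 0.1, ..., 20.9 (toy audio length)
```

Because RoPE attention scores depend only on the difference between two positions, audio token 100 (position 10.0) and video frame 10 end up with a relative offset of zero, so the model treats them as the same moment in time.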

Unified Inpainting via Channel Concatenation

On the video side, SkyReels-V4 uses a clever channel concatenation formulation to handle every generation task as a variant of inpainting:

- Text-to-video: all frames are generated (mask = 0 everywhere, so no frame is supplied as a condition)

- Image-to-video: first frame is conditioned; rest generated

- Video extension: first k frames are conditioned; rest generated

- Video editing: specific regions are masked for modification; rest preserved

This single unified interface means the same model handles generation, extension, and editing without task-specific branches or separate models.
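The mask patterns in the list above can be sketched in plain Python. This assumes a convention the section implies but does not spell out: a mask value of 1 marks content supplied as a condition, and 0 marks content to generate. Latents are reduced to one scalar channel per position for readability, and the function names are hypothetical; the paper’s actual channel layout may differ.

```python
def build_mask(task, num_frames, height, width, k=1, region=None):
    """Return mask[frame][y][x] with 1 = supplied as a condition, 0 = generate (assumed convention)."""
    def constant_frame(value):
        return [[value] * width for _ in range(height)]

    if task == "t2v":       # text-to-video: nothing is given
        return [constant_frame(0) for _ in range(num_frames)]
    if task == "i2v":       # image-to-video: only the first frame is given
        return [constant_frame(1 if f == 0 else 0) for f in range(num_frames)]
    if task == "extend":    # video extension: the first k frames are given
        return [constant_frame(1 if f < k else 0) for f in range(num_frames)]
    if task == "edit":      # editing: one region is regenerated, the rest preserved
        y0, y1, x0, x1 = region
        return [[[0 if (y0 <= y < y1 and x0 <= x < x1) else 1 for x in range(width)]
                 for y in range(height)]
                for _ in range(num_frames)]
    raise ValueError(f"unknown task: {task}")

def concat_channels(noisy, known, mask):
    """Stack [noisy latent, known latent * mask, mask] as channels at every position.
    Zeroing known content where mask == 0 hides the content the model must regenerate."""
    return [[[(n, c * m, m) for n, c, m in zip(n_row, c_row, m_row)]
             for n_row, c_row, m_row in zip(n_frame, c_frame, m_frame)]
            for n_frame, c_frame, m_frame in zip(noisy, known, mask)]

# Usage: extend a 4-frame, 2x2 toy clip from its first 2 frames
mask = build_mask("extend", num_frames=4, height=2, width=2, k=2)
```

The design point is that every task produces an input of the same shape; only the mask values change, so one network can serve all four tasks.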

Multi-Modal In-Context Learning

Reference images and video clips are injected directly into the video self-attention mechanism via temporal concatenation. They receive negative temporal indices (a positional trick) so the model understands these frames are “context” rather than “content to generate.” This lets the model extract fine-grained visual patterns — identity features, textures, pose variations — from reference material and carry them into the generation.
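A minimal sketch of the indexing trick follows, assuming references are placed before the target frames and numbered -R to -1; the exact ordering and offsets used in the paper may differ, and the helper names are hypothetical. In practice these indices would feed the temporal positional encoding, so references occupy a distinct, earlier stretch of the timeline than any frame being generated.

```python
def temporal_indices(num_reference_frames, num_target_frames):
    """References get -R..-1; frames to generate get 0..T-1."""
    return list(range(-num_reference_frames, 0)) + list(range(num_target_frames))

def build_in_context_sequence(reference_frames, target_frames):
    """Temporal concatenation into one sequence that self-attention sees as a whole.
    Each entry is (frame, temporal_index, is_context)."""
    indices = temporal_indices(len(reference_frames), len(target_frames))
    frames = list(reference_frames) + list(target_frames)
    return [(frame, idx, idx < 0) for frame, idx in zip(frames, indices)]

sequence = build_in_context_sequence(["ref_face", "ref_outfit"], ["f0", "f1", "f2"])
# [('ref_face', -2, True), ('ref_outfit', -1, True), ('f0', 0, False), ('f1', 1, False), ('f2', 2, False)]
```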

The Refiner: Speed Without Sacrificing Quality

To avoid the quadratic cost of full 1080p attention computation, SkyReels-V4 uses a two-stage efficiency strategy:

- The base model generates a low-resolution full sequence plus high-resolution keyframes.

- A dedicated Refiner module — using Video Sparse Attention (VSA), which cuts attention computation by approximately 3× — performs super-resolution and frame interpolation to produce the final high-fidelity output.
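The sketch below illustrates the general coarse-to-fine idea behind block-sparse attention such as VSA. It is a simplified stand-in, not VSA’s actual kernel: tokens are grouped into 1D blocks rather than spatio-temporal cubes, block selection uses mean-pooled dot products, and all names are hypothetical. Each query block scores every pooled key block, keeps the top_k best, and runs dense attention only over those keys, so the fine stage computes top_k / n_blocks of the dense query-key scores. Keeping one third of the blocks would cut fine-stage attention work by about 3× (ignoring the cheap coarse stage); that arithmetic is illustrative and is not a derivation of the paper’s figure.

```python
import math

def attend(query, keys, values):
    """Dense scaled dot-product attention for one query over the given keys."""
    scale = math.sqrt(len(query))
    scores = [sum(a * b for a, b in zip(query, key)) / scale for key in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(len(values[0]))]

def mean_pool(block):
    """Average a block of vectors into one representative vector."""
    return [sum(col) / len(block) for col in zip(*block)]

def block_sparse_attention(q, k, v, block_size, top_k):
    """Coarse stage: score each query block against mean-pooled key blocks.
    Fine stage: dense attention restricted to the top_k highest-scoring key blocks.
    Assumes len(q) == len(k) == len(v), divisible by block_size."""
    n_blocks = len(k) // block_size

    def split(xs):
        return [xs[i * block_size:(i + 1) * block_size] for i in range(n_blocks)]

    q_blocks, k_blocks, v_blocks = split(q), split(k), split(v)
    pooled_keys = [mean_pool(b) for b in k_blocks]
    out = []
    for q_block in q_blocks:
        pooled_q = mean_pool(q_block)
        coarse = [sum(a * b for a, b in zip(pooled_q, pk)) for pk in pooled_keys]
        chosen = sorted(range(n_blocks), key=lambda i: coarse[i], reverse=True)[:top_k]
        keys = [key for i in chosen for key in k_blocks[i]]
        values = [val for i in chosen for val in v_blocks[i]]
        out.extend(attend(query, keys, values) for query in q_block)
    return out

def fine_stage_fraction(n_blocks, top_k):
    """Share of dense query-key scores the fine stage still computes."""
    return top_k / n_blocks

# Usage: 12 toy tokens of width 2, in 4 blocks of 3; keep 1 key block per query block
tokens = [[math.sin(i), math.cos(2 * i)] for i in range(12)]
sparse_out = block_sparse_attention(tokens, tokens, tokens, block_size=3, top_k=1)
```

With top_k equal to the number of blocks, the result matches dense attention up to floating-point rounding, which is a useful sanity check for any sparse variant.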

The Four Core Capabilities of SkyReels-V4

1. Multimodal Precision Control

SkyReels-V4 accepts any combination of text, images, video clips, masks, and audio references in a single prompt. The MLLM encoder understands complex compositional instructions that reference multiple assets simultaneously.

A demonstrated example: replacing the human dancers in a Pulp Fiction clip with a dog and a cat (using two reference images), while preserving the original choreography, music, and background — with the animals’ movements precisely matching the beat of the original song. The model retained fur color and body proportions from the reference images while inheriting motion timing from the video.

This reflects three underlying capabilities:

- Reference-based style transfer and subject preservation — visual attributes (color, body shape) are extracted from reference images and applied to video

- Audio-driven motion generation — the background music in the reference video informs the timing and rhythm of movement

- Multi-reference fusion — multiple conditioning sources (images, video, audio) are processed together without conflict

2. Professional Video Inpainting

SkyReels-V4 supports surgical edits to existing video content:

- Regional inpainting: replace subjects, modify attributes (clothing color, object shape), swap backgrounds

- Element removal: remove watermarks, subtitles, logos — with natural background reconstruction

- Reference-guided restoration: maintain visual consistency before and after edits using a reference image

In a demonstrated example, a 10-second video clip with heavy English subtitle overlays was processed to completely remove all text, leaving the footage clean and unaltered in all other respects. This is the kind of task that traditionally requires specialized tools and frame-by-frame manual work.

3. Full-Dimension Video Editing

Beyond surgical inpainting, SkyReels-V4 supports creative transformations:

- Element insertion: Add a specific hat from a reference image onto a specific dancer in a K-pop practice video — correct color, correct placement, consistent across frames

- Element deletion: Remove specific named individuals from a scene; the background is reconstructed coherently

- Global style transfer: Transform the entire visual aesthetic of a video (e.g., convert a naturalistic scene to a cyberpunk cityscape)

- Camera motion control: Change the cinematography of a scene — from a static shot to a cinematic push-in or pan

The distinction from inpainting: inpainting preserves structure and makes targeted fixes; full-dimension editing can change the entire meaning and visual intent of a video.

4. High-Quality Audio Generation

SkyReels-V4 includes a native audio generation branch that supports:

- Multilingual speech synthesis with emotional expressiveness

- Sound effect generation (footsteps, impacts, ambient sounds — with realistic room acoustics)

- Background music generation and adaptation

- Lyric-synchronized singing

- Audio reference conditioning — provide a speech sample or musical theme and the model uses it to guide generation

In a demonstrated short drama clip, the model generated dialogue with distinct emotional tones (playful, urgent, angry), realistic table-strike sound effects with detectable wooden texture and room reverb, and speech that was clearly articulated and tonally nuanced. The audio quality was rated on par with dedicated professional audio generation tools for signal clarity, timbre realism, and dynamic range.

SkyReels-V4 Performance: How It Benchmarks

Artificial Analysis Video Arena

SkyReels-V4 was evaluated on the Artificial Analysis Video Arena in the text-to-video-with-audio track, scored through public pairwise comparisons. As of February 25, 2026, it ranks #2 overall, competing against Veo 3.1, Kling 3.0, Sora-2, Vidu-Q3, and Wan 2.6.
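Arena leaderboards built from public pairwise votes are commonly summarized with Elo-style ratings. The sketch below shows the standard Elo update as an illustration of how individual votes can turn into a ranking; it is not Artificial Analysis’s published methodology, and the K-factor of 32, the starting rating of 1000, and the model names are assumptions.

```python
def expected_score(rating_a, rating_b):
    """Probability that A beats B under the Elo logistic model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))

def elo_update(rating_a, rating_b, a_won, k=32.0):
    """Return updated (rating_a, rating_b) after one pairwise vote."""
    delta = k * ((1.0 if a_won else 0.0) - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta

def rank_from_votes(models, votes, start=1000.0, k=32.0):
    """votes: (winner, loser) pairs in the order they were cast."""
    ratings = {m: start for m in models}
    for winner, loser in votes:
        ratings[winner], ratings[loser] = elo_update(ratings[winner], ratings[loser], True, k)
    return sorted(ratings.items(), key=lambda item: item[1], reverse=True)

# Usage with hypothetical model names and votes
leaderboard = rank_from_votes(
    ["model_a", "model_b", "model_c"],
    [("model_a", "model_b"), ("model_a", "model_c"), ("model_b", "model_c")],
)
```

This sequential version depends on vote order; many arenas instead fit a Bradley-Terry model over all votes at once, which removes that dependence.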

SkyReels-VABench Human Evaluation

The team introduced SkyReels-VABench, a new benchmark with 2,000+ prompts evaluated by 50 professional evaluators across five dimensions: Instruction Following, Audio-Visual Synchronization, Visual Quality, Motion Quality, and Audio Quality.

Results (5-point Likert scale):

- Highest overall average score among all competing models

- Strongest in: Instruction Following and Motion Quality

- Competitive in: Visual Quality, Audio-Visual Synchronization, Audio Quality

In pairwise Good-Same-Bad comparisons, SkyReels-V4 receives a higher proportion of “Good” ratings against every baseline: Kling 2.6, Seedance 1.5 Pro, Veo 3.1, and Wan 2.6.
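The two reporting styles above can be illustrated with a short sketch: averaging 5-point Likert scores per dimension and then across dimensions, and summarizing Good-Same-Bad judgments as proportions plus a net (G - B) / total score. The net score is a common GSB summary but an assumption here, since the section reports only proportions; the ratings in the usage example are made up and are not SkyReels-VABench data.

```python
def likert_summary(scores_by_dimension):
    """Per-dimension mean of 1-5 Likert ratings, plus the unweighted mean across dimensions."""
    per_dimension = {dim: sum(s) / len(s) for dim, s in scores_by_dimension.items()}
    overall = sum(per_dimension.values()) / len(per_dimension)
    return per_dimension, overall

def gsb_summary(judgments):
    """judgments: 'G' (model better), 'S' (same), 'B' (baseline better)."""
    total = len(judgments)
    good, same, bad = (judgments.count(label) for label in ("G", "S", "B"))
    return {"good": good / total, "same": same / total, "bad": bad / total,
            "net": (good - bad) / total}

# Illustrative, made-up ratings
per_dim, overall = likert_summary({"Motion Quality": [4, 5], "Audio Quality": [3, 3]})
summary = gsb_summary(list("GGGSB"))
```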

SkyReels-V4 vs. Competing Models

| Feature | SkyReels-V4 | Kling 3.0 | Veo 3.1 | Sora-2 |
|---|---|---|---|---|
| Multimodal inputs (text + image + video + audio) | ✅ | Partial | Not disclosed | Not disclosed |
| Native joint video-audio generation | ✅ | ✅ | ✅ | ✅ |
| Unified inpainting + editing framework | ✅ | ❌ | ❌ | ❌ |
| Open research paper | ✅ | ❌ | ❌ | ❌ |
| Max resolution | 1080p | Not disclosed | Not disclosed | Not disclosed |
| Max duration | 15 seconds | Varies | Varies | Varies |

SkyReels-V4’s primary differentiator is its unified architecture: among the models compared here, it is the only one with a published description of a single framework that combines multimodal input, joint video-audio generation, and generation/inpainting/editing.

Training: How SkyReels-V4 Was Built

The model was trained in three major phases:

Video Pretrain (6 stages): Starting from text-to-image at 256px, progressively scaling to 1080p, introducing inpainting tasks, then multimodal conditioning. Data volume ranged from 3 billion images in early stages to 50 million curated items in later stages.

Audio Pretrain: The audio backbone was trained from scratch on hundreds of thousands of hours of speech and audio data at variable lengths up to 15 seconds, covering multilingual speech, sound effects, music, and singing.

Video-Audio Joint Training + SFT: The two pretrained branches were combined for joint training on text-to-video (T2V), text-to-audio-video (T2AV), and text-to-audio (T2A) tasks. Final supervised fine-tuning (SFT) used 5 million videos with multimodal conditions, concluding with 1 million manually curated high-quality videos.

This progressive “climbing” approach (low resolution to high, single modality to joint, then fine-tuning) is designed to make the audio and video branches genuinely integrated rather than bolted together.

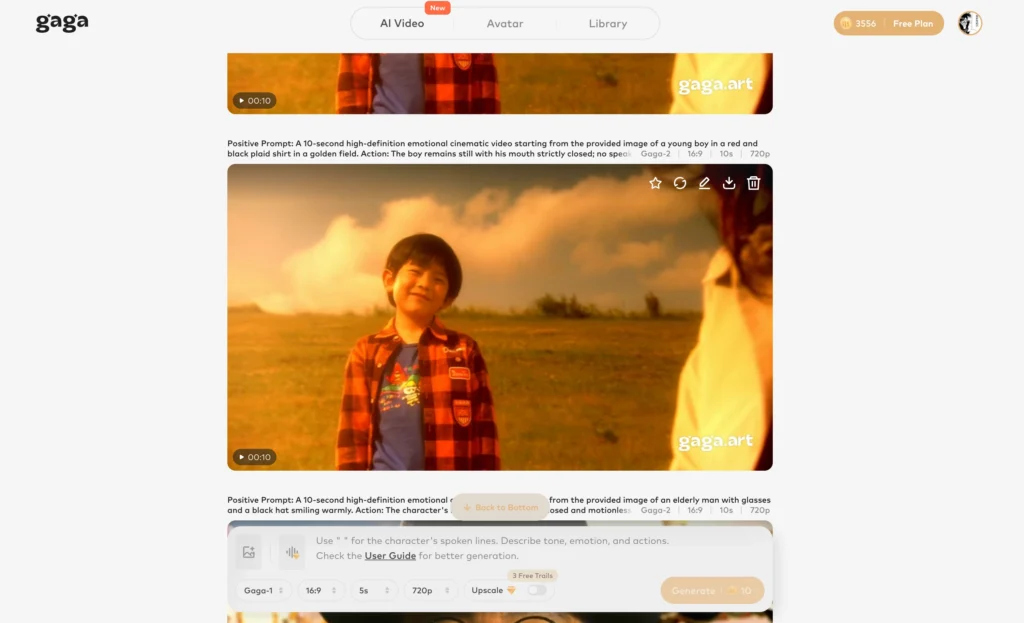

Bonus: Gaga AI — Another Powerful Toolkit for AI Video Creators

If you’re exploring AI video generation beyond research models, Gaga AI is worth your attention as a practical, production-ready toolkit. It packages several key capabilities in an accessible interface:

Image to Video AI

Gaga AI converts static images into dynamic video clips — similar to SkyReels-V4’s I2V capability — letting you animate product photos, portraits, and illustrations without any video production background.

Video and Audio Infusion

Gaga AI allows you to layer audio tracks into generated or uploaded videos, giving you synchronized sound without external editing tools. This makes it practical for content creators who need video + audio output in a single step.

AI Avatar

The platform generates realistic AI avatars that can speak, gesture, and react — useful for corporate training videos, explainers, and social content that needs a human presence without requiring on-camera talent.

AI Voice Clone

Gaga AI’s voice cloning feature replicates a speaker’s vocal characteristics from a short audio sample. This enables consistent voiceovers across long-form content, multilingual dubbing, or persona-consistent AI characters.

Text-to-Speech (TTS)

For creators who need narration without a voice actor, Gaga AI’s TTS engine produces natural-sounding speech across multiple languages and emotional registers — directly integrated into the video workflow.

Together, these features make Gaga AI a practical companion for creators who want to apply AI video and audio tools to real production scenarios today.

Frequently Asked Questions

What is SkyReels-V4?

SkyReels-V4 is a multi-modal video foundation model developed by Skywork AI. Its authors describe it as the first AI model to simultaneously support multimodal inputs (text, images, video, masks, audio), joint video-audio generation, and a unified framework for video generation, inpainting, and editing, with output of up to 1080p at 32 FPS.

What makes SkyReels-V4 different from other AI video generators?

Most AI video generators handle only one task: generate a video from text, animate an image, or edit a clip. SkyReels-V4 does all of these within the same architecture, and it also generates synchronized audio natively rather than as an afterthought. According to the SkyReels team, no other publicly documented model combines all four capabilities in a single model.

Can SkyReels-V4 generate audio automatically with video?

Yes. SkyReels-V4 includes a dedicated audio branch in its dual-stream architecture that generates speech, sound effects, and background music synchronized to video output. It also supports audio reference conditioning — provide a sample and the model uses it to guide audio generation.

What resolution and length does SkyReels-V4 support?

SkyReels-V4 supports up to 1080p resolution, 32 FPS, and 15-second video duration. This is achieved via a joint low-resolution/high-resolution keyframe generation strategy followed by a Refiner module that performs super-resolution and frame interpolation.

What inputs does SkyReels-V4 accept?

The model accepts text prompts, reference images, reference video clips, binary masks for regional editing, and audio references. These can be combined in a single instruction (e.g., “use the character from image A, performing the motion from video B, with the music style from audio C”).

How does SkyReels-V4 perform on benchmarks?

As of February 2026, SkyReels-V4 ranks #2 on the Artificial Analysis Video Arena leaderboard for text-to-video-with-audio generation. On the team’s own SkyReels-VABench human evaluation (50 professional evaluators, 2,000+ prompts), it achieves the highest overall score, with particular strength in instruction following and motion quality.

Is SkyReels-V4 open source?

The research paper (arXiv:2602.21818) is publicly available. Check the official SkyReels / Skywork AI channels for model weights and API access details, as availability may evolve post-publication.

What comes after SkyReels-V4?

The team has indicated future work targeting longer video durations, higher resolutions (4K and 8K), improved cross-language audio-visual coherence, and reduced inference cost for broader deployment.